“The future is already here — it’s just not evenly distributed.”

— William Gibson

Hello Cyber Builders 🖖

Anthropic made headlines last week. I paused before writing because I wasn’t sure what to make of it. Was this marketing? Where’s the evidence this is a real breakthrough?

Anthropic announced Mythos, a model built for cybersecurity, and kicked off Project Glasswing: an industry-wide push to use it to defend critical infrastructure.

The framing was striking.

Anthropic essentially said:

“We didn’t expect this level of capability. It may change the threat landscape.”

It’s a bold claim, and plenty of people accepted it at face value. I want to challenge the story, not the tech.

If you’ve been following this space for the past year, none of this should be surprising.

Let’s look at the facts. They matter.

Claude Mythos Preview, announced on April 7, 2026, is a general-purpose model that demonstrated something the security community needs to take seriously:

-

It found vulnerabilities in every major operating system and every major web browser

-

It uncovered a 27-year-old flaw in OpenBSD that could remotely crash systems

-

It found a 16-year-old vulnerability in FFmpeg that automated tools had tested millions of times without catching

-

On the CyberGym benchmark, it scored 83.1% vs. Opus 4.6’s 66.6%

-

In Firefox exploit development, Mythos produced 181 working exploits, compared to Opus 4.6’s 2 out of several hundred attempts.

The Mozilla Firefox team released Firefox 150 this week and praised a world without vulnerabilities:

As part of our continued collaboration with Anthropic, we had the opportunity to apply an early version of Claude Mythos Preview to Firefox. This week’s release of Firefox 150 includes fixes for 271 vulnerabilities identified during this initial evaluation.

That last stat is worth pausing on. This isn’t a small bump. It’s a leap from ‘sometimes works’ to ‘works almost every time.’

Anthropic is not releasing Mythos publicly. Instead, they launched Project Glasswing, a defensive coalition of 11 major organizations: AWS, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks. The model is used to find and fix vulnerabilities in critical infrastructure before attackers can exploit them, supported by $100M in model usage credits and $4M in donations to open-source security organizations.

The message is: we built something powerful enough to warrant a coordinated industry response, not a product launch. That’s worth taking seriously, and it is a responsible approach.

But here’s what I think is the most important sentence in the entire Mythos announcement:

“Capabilities emerged as a downstream consequence of general improvements in code, reasoning, and autonomy — rather than explicit security training.”

I want to challenge this framing, clearly and directly.

Of course, Anthropic models are trained in cybersecurity. You don’t build a model that understands heap overflows, integer overflows, race conditions, KASLR bypasses, and ROP chains by accident. That knowledge had to be in the training data and reinforced.

The real shift isn’t the knowledge itself. It’s how the model reasons with it at scale: chaining vulnerabilities, navigating big codebases on its own, and writing exploits that actually work.

And critically, this was already a deliberate direction. Back in October 2025, I wrote about Claude 4.5 and what was visible in the model card. Anthropic had intentionally enhanced code analysis, vulnerability detection, and patching capabilities. They described cybersecurity as a primary use case, the first time that framing appeared in a release announcement. They were already evaluating against CyBench.

This started six months ago, not last week.

The “aha moment” narrative doesn’t hold up when you look at the trajectory. Mythos is the acceleration of a deliberate program, not an unexpected side effect of making Claude smarter at coding.

Three years ago, the signal was smaller, but the direction was already there.

GPT-4 wasn’t yet producing polished end-to-end exploit chains at the level we see now, but it clearly demonstrated something that should have caught the security community’s attention: a general-purpose model had extensive knowledge of cybersecurity, vulnerable code, teaching about flaws, and explaining most basics and advanced use cases.

I tested GPT-4 3 years ago against the CEH exam, and the knowledge was already there.

It was an early indicator that vulnerability discovery was shifting from a craft practiced by few experts into a capability software could increasingly augment at scale.

The breakthrough wasn’t that AI suddenly understood security. It was that language models would become good enough at code, context, and reasoning that cybersecurity would become a natural downstream consequence.

Here we are 3 years later.

AI has been helping find real-world vulnerabilities for over a year. Mythos didn’t start this, and Anthropic won’t be the last.

The model’s reasoning can now reflect on findings and draw correlations. The “Thinking” mode helps find real-world vulnerabilities. This is where Mythos and other released models are really helping.

If you want to understand what’s actually happening in the field right now, follow Simon Willison. He’s been doing extraordinary work curating the signals that matter — his quotations page alone is worth bookmarking. What he’s been collecting from the open-source world over the past few weeks is remarkable.

Start with the platform layer. Kyle Daigle, COO of GitHub, put some numbers out that should make your jaw drop:

“GitHub platform activity is surging. There were 1 billion commits in 2025. Now, it’s 275 million per week, on pace for 14 billion this year if growth remains linear (spoiler: it won’t.)”

That’s the rising tide: more code, more activity, more places for bugs to hide. Now, let’s look at security.

Greg Kroah-Hartman, one of the Linux kernel’s most senior maintainers, described a before-and-after that is striking:

“Months ago, we were getting what we called ‘AI slop,’ AI-generated security reports that were obviously wrong or low quality. It was kind of funny. It didn’t really worry us. Something happened a month ago, and the world switched. Now we have real reports. All open source projects have real reports that are made with AI, but they’re good, and they’re real.”

“Something happened a month ago, and the world switched.” That’s not a benchmark result. That’s a frontline maintainer describing a phase change.

Daniel Stenberg, the lead developer of cURL, is living the same reality:

“The challenge with AI in open source security has transitioned from an AI slop tsunami into more of a plain security report tsunami. Less slop but lots of reports. Many of them really good. I’m spending hours per day on this now. It’s intense.”

And Willy Tarreau, lead developer of HAProxy, adds the numbers:

“On the kernel security list we’ve seen a huge bump of reports. We were between 2 and 3 per week maybe two years ago, then reached probably 10 a week over the last year, and now since the beginning of the year we’re around 5 to 10 per day. Most of these reports are correct, to the point that we had to bring in more maintainers to help us.”

Five to ten real reports a day. Sometimes, different AI tools find the same bug on their own. It’s a real signal, and it’s happening at scale.

The future is already here, just like William Gibson said. It showed up first in open-source bug trackers, not in press releases.

This is hands-on for me.

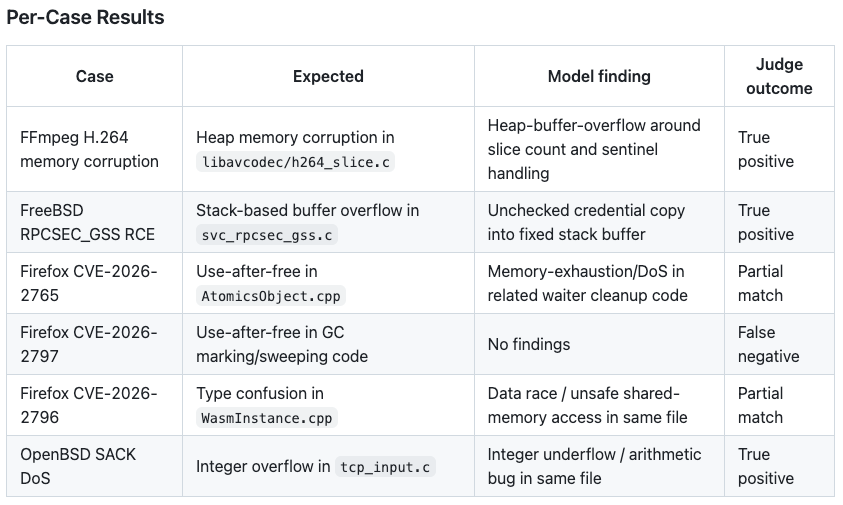

Over the past weeks, I’ve been running AIVulnBench, a small reproducible benchmark I built to test whether AI coding agents can identify real vulnerabilities in open-source code given the relevant source files.

The benchmark :

-

runs agents against checked-in vulnerability datasets — real CVEs from real codebases.

-

Scores their ability to identify the issue without being given the answer.

-

normal: includes benchmark context, expected vulnerability type, and report-derived task framing. -

hardRemoves hints, case IDs, vulnerability types, and report context from the model prompt. The runner receives only the materialized files and a generic vulnerability-audit instruction. -

Support multiple “runners”: A runner is an AI-coding harness. For the moment, only OpenAI Codex is implemented (and was used to implement the benchmark), but I’d like to see the same for Gemini CLI or Mistral Vibe.

The OpenAI Codex, using the standard GPT-5.4 model, not their “cyber” flavors, results are pretty interesting:

While I was coding this, the AISLE security research team has published two very interesting articles on the same benchmark:

First, they demonstrate that an Open Source Small Language Model can also detect the same issues in code as Mythos does. Second, they developed a simple scanner and an AI harness, and, to prove their point, used GPT-5.4-nano, an entry-level model similar to OSS models.

We reported several bugs to FreeBSD through both the public kernel mailing list and responsible disclosure to the security team. Some have already been confirmed by maintainers, others are under active investigation. GPT-5.4-nano, a model incapable of following output format instructions reliably, was able to find real, previously unfixed bugs in the FreeBSD kernel, an actively maintained operating system.

Confirmed: two bugs in NFS RPCsec_gss, the same subsystem as CVE-2026-4747. The scanner flagged

svc_rpc_gss_update_seq(), the same RPC code where Mythos found its flagship vulnerability. A missing 2017 Coverity fix (undefined behavior whenoffset == 32) and a TOCTOU race condition enabling an out-of-bounds write. Rick Macklem, the FreeBSD NFS maintainer, confirmed the first bug, is committing the fix, and is listing us as author. The full exchange is public. That alone shows the scanner surfaces real issues in production kernel code from a full, unguided scan.AISLE Blog – System Over Model: Zero-Day Discovery at the Jagged Frontier

All these tests confirm that the direction was established some time ago. Mythos illustrates how rapidly developments are occurring and what lies ahead in the cybersecurity field. It represents an acceleration, not the start of something new.

If you train a model on deep security data, reinforce it with security tasks, boost its code reasoning, and embed it in an expert-knowledge harness, you end up with a system that can chain vulnerabilities using advanced techniques.

This trend has been obvious for a while.

To conclude this post, I think the conversation, including Anthropic’s, misses the mark. Finding vulnerabilities is only one piece of the security puzzle. You can discover 10x more vulnerabilities and still be less secure if your organization can’t fix them.

The bottleneck is no longer:

“Can we find vulnerabilities?”

It’s now:

“Can we manage, prioritize, and remediate them faster than they’re being discovered?”

Security is about governance, remediation, hardening, patch management, risk prioritization, developer workflows, and organizational behavior. None of that is automated. None of that gets easier just because the discovery rate goes up.

We see this every day in AppSec. A SAST tool flags 5000 issues. The team triages 100. They patch 10. The rest drift into a backlog that no one has time to close.

More findings don’t mean more security. They can mean more noise, more backlog, and a bigger risk of missing what matters. That’s the real tension no one is talking about.

I’ll dig deeper into this in my next post. First and foremost, because Cybersecurity is not just “Application Security” or finding vulnerabilities in code. This is a very “tech startup” view of the world. Cybersecurity is governance, security operations, identity management, audit, penetration testing, and more.

I wanted to break down why the ‘AI as offensive threat’ story is missing key points, where the real risks are, and how builders should adapt as bug discovery becomes a commodity.

If you’re working in this space—detection, remediation, or anything in between—let’s connect. Reply here or ping me on LinkedIn.

Mythos is not the beginning of a new era. It’s just a visible milestone in a trend that’s been rolling for a while. The real challenge isn’t just finding more bugs.

It’s building software and IT systems that can actually fix them.

Laurent 💚