TL;DR

Using custom CUDA kernels and speculative decoding optimized for reasoning workloads, we achieved 414 tokens per second throughput on Kimi K2.5 running on Nvidia B200 GPUs, making us one of the first providers to reach 400+ tokens per second on a trillion-parameter reasoning model.

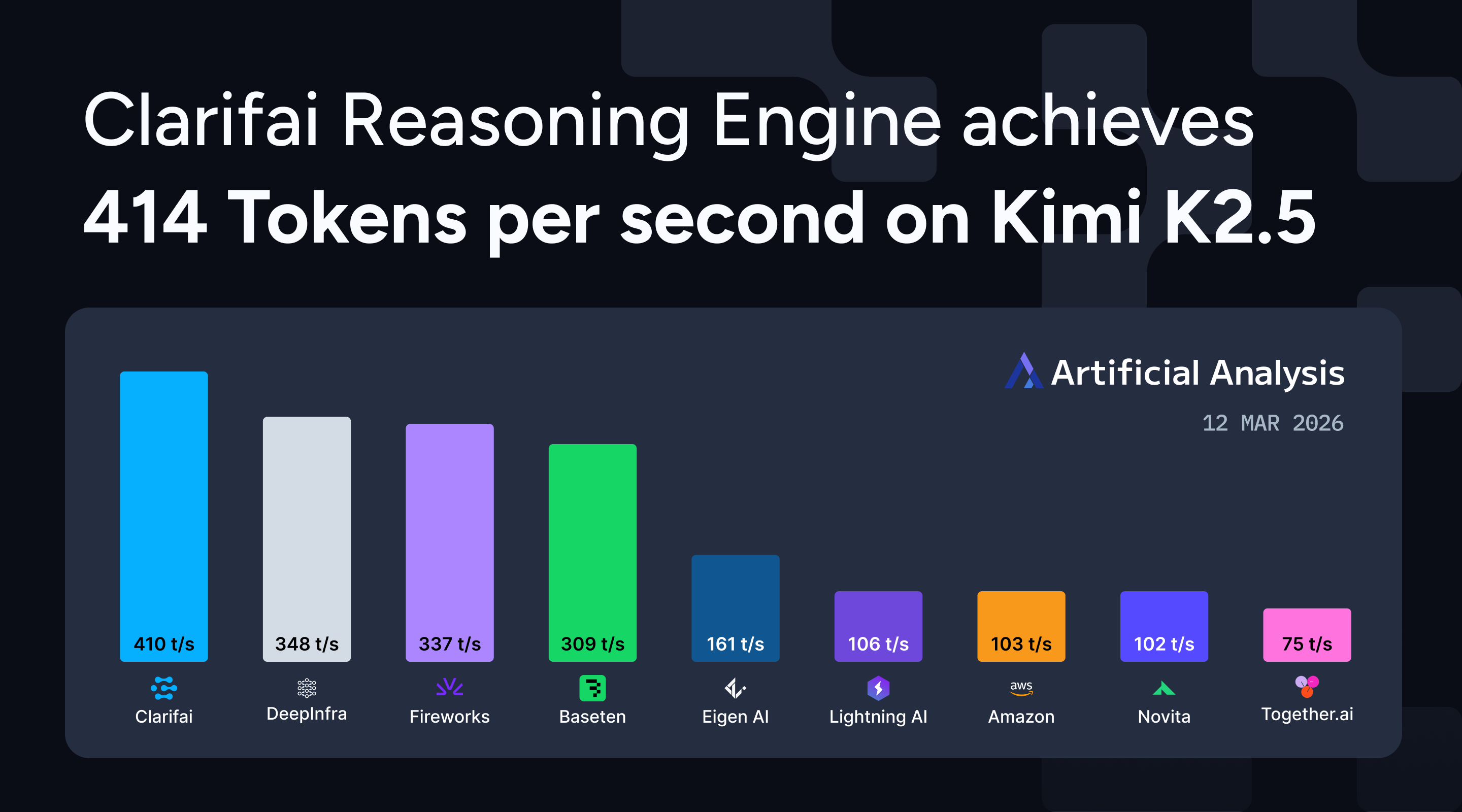

Ahead of Nvidia GTC, we’re excited to share that Clarifai Reasoning Engine achieves 414 tokens per second (TPS) throughput on Kimi K2.5, positioning us among the top inference providers for frontier reasoning models as measured by Artificial Analysis. Running on Nvidia B200 GPU infrastructure, our platform delivers production-grade performance for agentic workflows and complex reasoning tasks.

Figure 1: Clarifai achieves 414 tokens per second on Kimi K2.5, ranking among the fastest inference providers on Artificial Analysis benchmarks.

Why Kimi K2.5 performance matters

Kimi K2.5 is a 1-trillion-parameter reasoning model with a 384-expert Mixture-of-Experts architecture that activates 32 billion parameters per token. Built by Moonshot AI with native multimodal training on 15 trillion mixed visual and text tokens, the model delivers strong performance across key benchmarks: 50.2% HLE with tools, 76.8% SWE-Bench Verified, and 78.4% BrowseComp.

As a reasoning model, Kimi K2.5 generates extended thinking sequences before final answers. Clarifai achieves a time to first answer token of 6 seconds, which includes the model’s internal thinking time before providing a response. Throughput directly impacts end-to-end response time for agentic systems, code generation, and multimodal reasoning tasks. At 414 TPS, we deliver the speed required for production deployments.

Figure 2: Time to first answer token (TTFT) performance across inference providers, measured by Artificial Analysis with 10,000 input tokens.
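To make the link between throughput and end-to-end response time concrete, here is a rough back-of-the-envelope sketch. The 6-second time to first answer token and 414 TPS come from the figures above; the function name `end_to_end_seconds` and the 1,000-token answer length are illustrative assumptions, not measured values.

```python
def end_to_end_seconds(ttfat_s: float, answer_tokens: int, tps: float) -> float:
    """Estimate wall-clock time for one response.

    ttfat_s: time to first answer token in seconds (includes thinking time)
    answer_tokens: answer tokens streamed after the first one (hypothetical)
    tps: sustained output throughput in tokens per second
    """
    if tps <= 0:
        raise ValueError("tps must be positive")
    # Wait for the first answer token, then stream the rest at the given rate.
    return ttfat_s + answer_tokens / tps


# 6 s and 414 TPS are from the text; 1,000 answer tokens is an assumed length.
estimate = end_to_end_seconds(ttfat_s=6.0, answer_tokens=1000, tps=414.0)
print(f"{estimate:.2f} s")  # prints "8.42 s"
```

This is a simplification: thinking tokens are also generated at the model's output rate, so in practice higher throughput tends to shorten the time to first answer token as well as the streaming phase.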

How we optimize for throughput

Clarifai Reasoning Engine uses three core optimizations for large reasoning models:

Custom CUDA kernels reduce memory stalls and enhance cache locality. By optimizing low-level GPU operations, we keep streaming multiprocessors active during inference rather than waiting on data movement.

Speculative decoding predicts possible token paths and prunes misses quickly. This reduces wasted computation during the long thinking sequences that reasoning models generate before answering.

Adaptive optimization continuously learns from workload behavior. The system dynamically adjusts batching, memory reuse, and execution paths based on actual request patterns. These improvements compound over time, especially for the repetitive tasks common in agentic workflows.

Running on Nvidia B200 infrastructure gives us the hardware foundation to push performance boundaries, while our inference optimization stack delivers the software-level gains.
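For readers unfamiliar with speculative decoding, the general idea can be sketched in a few lines. This is a toy, single-path, greedy-verification variant of the textbook technique, not the Clarifai Reasoning Engine implementation: the `draft` and `target` functions are hypothetical stand-ins for a small draft model and the full model, and a real system would verify all drafted positions in one batched forward pass.

```python
from typing import Callable, List, Tuple

# A "model" here is just a function from a token prefix to the next token.
NextToken = Callable[[List[int]], int]


def speculative_step(prefix: List[int], draft: NextToken,
                     target: NextToken, k: int) -> List[int]:
    """Run one draft-then-verify round and return the accepted tokens."""
    # 1. The cheap draft model proposes k tokens autoregressively.
    proposed: List[int] = []
    for _ in range(k):
        proposed.append(draft(prefix + proposed))

    # 2. The target model checks each proposed position in order.
    #    (This loop stands in for a single batched verification pass.)
    accepted: List[int] = []
    for tok in proposed:
        expected = target(prefix + accepted)
        if tok != expected:
            # First miss: keep the target's token and discard the rest.
            accepted.append(expected)
            return accepted
        accepted.append(tok)

    # 3. All drafts matched, so the target adds one extra token.
    accepted.append(target(prefix + accepted))
    return accepted


def generate(prefix: List[int], draft: NextToken, target: NextToken,
             k: int, max_new_tokens: int) -> Tuple[List[int], int]:
    """Generate tokens with speculative rounds; return (tokens, rounds)."""
    out = list(prefix)
    rounds = 0
    while len(out) - len(prefix) < max_new_tokens:
        out.extend(speculative_step(out, draft, target, k))
        rounds += 1
    return out[: len(prefix) + max_new_tokens], rounds


# Toy models: the target counts upward modulo 10; the draft agrees
# except after the token 2, where it guesses wrong.
def target(seq: List[int]) -> int:
    return (seq[-1] + 1) % 10


def draft(seq: List[int]) -> int:
    return 0 if seq[-1] == 2 else (seq[-1] + 1) % 10


tokens, rounds = generate([0], draft, target, k=4, max_new_tokens=12)
print(tokens, rounds)
```

In this toy run the output matches what the target alone would produce, but the 12 new tokens arrive in 3 verification rounds rather than 12 sequential target steps. The gain in a real deployment depends on how often the draft agrees with the target.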

Building with Kimi K2.5

Kimi K2.5 is now available on the Clarifai Platform. Try it out on the Playground or via the API to get started.

If you need dedicated compute to deploy Kimi K2.5 and other leading open models at scale for production workloads, get in touch with our team.